OpenAI launched Codex Security, an AI tool for finding vulnerabilities in code.

The company claims that within a month, Codex Security checked more than 1.2 million lines of source code and identified 792 critical vulnerabilities.

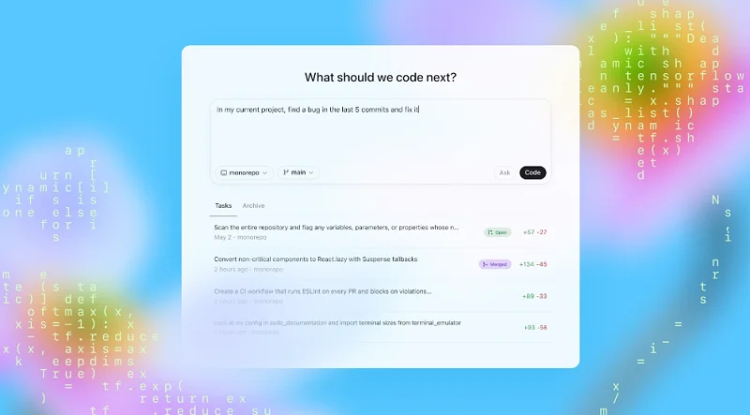

OpenAI has unveiled Codex Security, an AI tool that analyzes source code for vulnerabilities. Besides detecting vulnerabilities, it can suggest ways to remediate them. The tool is currently available in research preview to ChatGPT Enterprise, Business, and Edu users.

Codex Security uses the data gathered during repository analysis to build a contextual threat model for the specific project. Next, the tool evaluates how the identified threats would affect the system and, where necessary, tests its hypothesis in an isolated environment. This validation step is meant to reduce false positives so that the tool reports only real problems.
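The flow described above (threat model, impact check, validation in isolation) can be illustrated with a minimal sketch. This is not OpenAI's implementation: the `Finding` structure, the `triage` function, the set of exposed components, and the `reproduce` callback are all hypothetical names introduced here purely to show how filtering by context and then by validation cuts down false positives.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Set


@dataclass(frozen=True)
class Finding:
    """A candidate vulnerability (hypothetical structure, for illustration)."""
    rule: str
    component: str
    severity: str


def triage(
    findings: Iterable[Finding],
    exposed_components: Set[str],
    reproduce: Callable[[Finding], bool],
) -> List[Finding]:
    """Keep only findings that matter in context and can be reproduced.

    exposed_components stands in for the project threat model;
    reproduce stands in for a check run in an isolated environment.
    Both are assumptions, not part of any real API.
    """
    confirmed = []
    for finding in findings:
        # Step 1: threat-model filter, skip components that are not exposed.
        if finding.component not in exposed_components:
            continue
        # Step 2: validation, keep the finding only if it reproduces.
        if reproduce(finding):
            confirmed.append(finding)
    return confirmed


candidates = [
    Finding("sql-injection", "api", "critical"),
    Finding("weak-hash", "internal-tool", "high"),
    Finding("path-traversal", "api", "high"),
]

# Pretend that only the SQL injection reproduces in the sandbox.
confirmed = triage(
    candidates,
    exposed_components={"api"},
    reproduce=lambda f: f.rule == "sql-injection",
)
print([f.rule for f in confirmed])  # ['sql-injection']
```

Of the three candidates, one is dropped because its component is not exposed and another because it does not reproduce, so only one finding reaches the user.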

Once Codex Security identifies a potential threat, it offers the user a ready-made patch that takes the project's architecture into account. OpenAI is confident that this approach will allow vulnerabilities to be eliminated quickly and reduce the likelihood that they recur.

According to OpenAI, over the past month Codex Security analyzed more than 1.2 million lines of code and identified 792 critical vulnerabilities along with more than 10,000 serious security issues.

OpenAI plans to expand the tool's availability to corporate clients in the foreseeable future. The first month of use is free of charge.
